8.57 Evaluate the model

… and we take a look at the training and testing performance (notice the improvement in testing RMSE over the decision tree).

augment(bagging_fit, new_data = carseats_train) %>%

rmse(truth = Sales, estimate = .pred)

## # A tibble: 1 × 3

## .metric .estimator .estimate

## <chr> <chr> <dbl>

## 1 rmse standard 0.671

augment(bagging_fit, new_data = carseats_test) %>%

rmse(truth = Sales, estimate = .pred)

## # A tibble: 1 × 3

## .metric .estimator .estimate

## <chr> <chr> <dbl>

## 1 rmse standard 1.35

Training RMSE: 0.671. Testing RMSE: 1.35. The testing error is about twice the training error, which suggests the model overfits.
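To make the metric concrete, here is a minimal base R sketch of the quantity that `rmse()` reports: the square root of the mean squared difference between truth and prediction. The helper name `rmse_manual` and the toy vectors are hypothetical and chosen only for illustration; they are not the Carseats results.

```r
# Hypothetical helper: root mean squared error computed by hand.
rmse_manual <- function(truth, estimate) {
  sqrt(mean((truth - estimate)^2))
}

# Toy values for illustration only (not from carseats_train or carseats_test).
truth    <- c(3, 5, 7, 9)
estimate <- c(2, 5, 8, 11)

# Errors are 1, 0, -1, -2, so the mean squared error is 6 / 4 = 1.5.
toy_rmse <- rmse_manual(truth, estimate)
print(toy_rmse)
```

Because the errors are squared before averaging, a few large misses raise the RMSE more than many small ones, which is worth keeping in mind when comparing the training and testing values.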

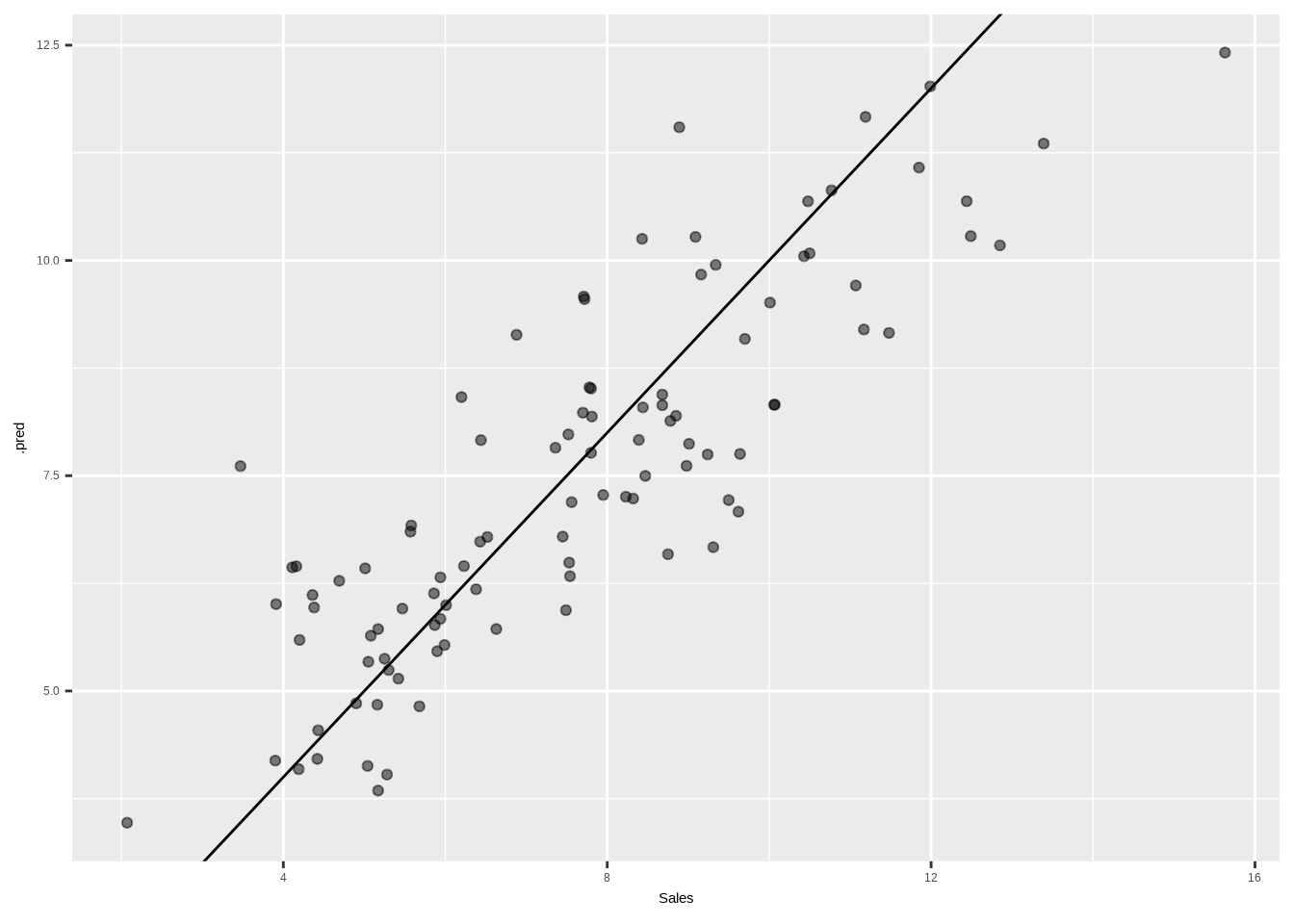

We can also create a quick scatterplot of the true against the predicted values to check for any visible problems with the predictions.

augment(bagging_fit, new_data = carseats_test) %>%

ggplot(aes(Sales, .pred)) +

geom_abline() +

geom_point(alpha = 0.5)
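As a numeric companion to the plot, the sketch below shows how to read it. With `Sales` on the x-axis and `.pred` on the y-axis, `geom_abline()` draws the line where prediction equals truth by default, so points above the line are over-predictions and points below are under-predictions. The data frame `results` and its values are hypothetical stand-ins for the output of `augment()`.

```r
# Hypothetical stand-in for augment() output; values are illustrative only.
results <- data.frame(
  Sales = c(4.0, 6.5, 8.0, 10.0, 12.5),
  .pred = c(5.0, 6.5, 8.5, 9.0, 11.0)
)

# Residual = truth minus prediction.
results$residual <- results$Sales - results$.pred

# Negative residual: the point sits above the identity line (over-predicted).
# Positive residual: the point sits below the identity line (under-predicted).
results$position <- ifelse(
  results$residual < 0, "over-predicted",
  ifelse(results$residual > 0, "under-predicted", "exact")
)

print(results)
print(mean(results$residual))  # average signed error across the toy rows
```

A mean residual far from zero, or residuals that grow with `Sales`, would show up in the scatterplot as points drifting systematically to one side of the line.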